The nurse waves me through without looking up. My grandmother is in the sunroom, same chair as always, her Halo resting against silver hair. The chip beneath her temple pulses with a soft theta rhythm — I can see it on my phone, synced to her care dashboard. Neural activity stable. Semantic coherence declining.

I’ve been monitoring her metrics for three years. Every visit, a little less signal. A little more noise.

“Hi, Grandma.” I pull up a chair, close enough that our Halos link automatically. My phone vibrates: Proximity pairing enabled. Shared semantic space active.

Hers is a medical unit. Mine is just a sleek consumer band that offloads its heavy processing to the phone in my pocket.

She looks at me with those searching eyes. “You remind me of someone.”

“I’m your granddaughter. Aera.”

“That’s a pretty name.” She smiles, distant and kind, the way she smiles at everyone now. “Did we know each other?”

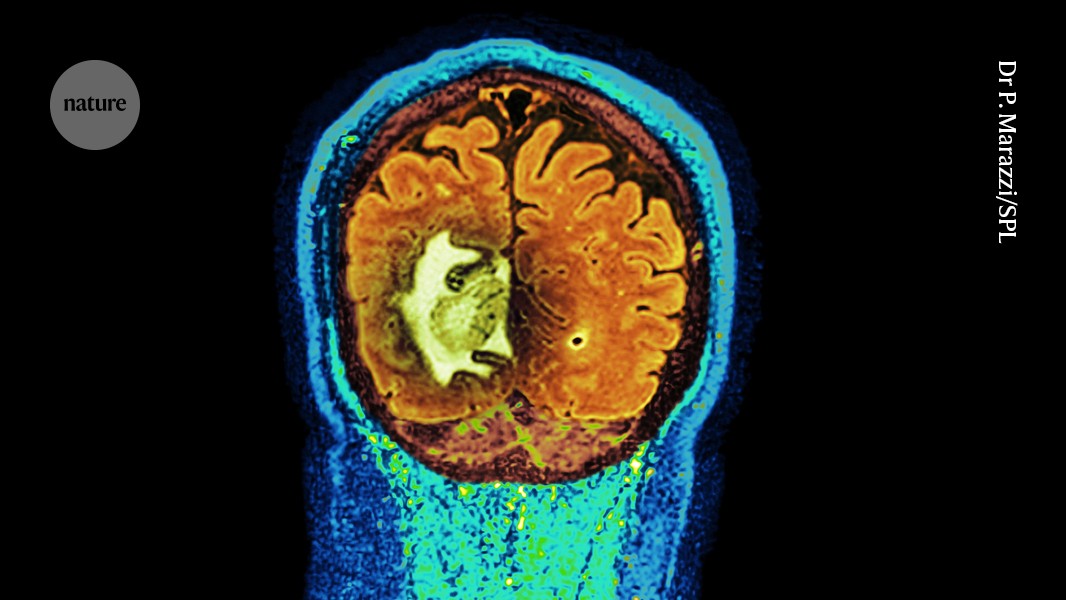

I want to say yes. Want to tell her about braiding hair, about songs while cooking, about the woman she was before the degradation carved through her hippocampus. But she’s asked me this every week for a year. The answer never sticks.

Instead, I check my phone. Her thought-vectors are fragmenting in real time — I can watch the embeddings scatter as she tries to place me. Granddaughter. Family. Important. The words are there, encoded in neural firing patterns, but they won’t connect to my face. To this moment. To anything solid.

“We did,” I say. “You taught me a lot.”

“I’m glad.” She squeezes my hand.

And my phone lights up.

Recognized gesture: affection, connection, reassurance. Predicted semantic match: 91%.

I stare at the screen. Predicted. Not observed. Not remembered. The model analysed the pressure of her fingers, compared it with billions of similar moments in the global data set, and decided this was probably affection.

Maybe she means it. Maybe Serebral is just filling in the gaps.

I don’t know which any more.

“Grandma, do you remember getting your chip?” I ask. “When you were 70?”

She frowns, and I watch her theta bands spike — the neural signature of effortful recall. The chip records it all, sends it to the cloud, adds it to the training corpus. Her struggle, made data.

“I think … they said it would help.”

“It was supposed to. Catch the Alzheimer’s early.” I hear the bitterness in my voice and feel my own Halo warm against my scalp — elevated stress markers, probably. Being recorded. “They implanted you too late. You were already sick.”

Her confusion deepens. I shouldn’t be doing this. Shouldn’t be upsetting her. But I need to know if anything I say reaches through the noise.

“If you’d had it at 60,” I continue, watching the metrics scatter on my phone, “they might have caught it. Started treatment sooner. Bought you time.”

“Time,” she echoes. “That would have been nice.”

My phone vibrates again. A notification I’ve seen before but never really looked at:

Based on your recent emotional patterns, you may benefit from: Cognitive Behavioural Therapy for Grief. Memory Care Planning Services. Support Groups for Family Members.

I stare at it. I haven’t searched for this. Haven’t mentioned grief to anyone. Just thought about it, here, holding my grandmother’s hand while she forgets me.

I scroll through my feed. Six more adverts, all targeted to this visit. To theta-band anxiety I haven’t consciously felt yet. To thoughts I haven’t finished thinking.

My Halo hums softly, recording. Optimizing my next thought before I have it.

“What’s wrong, dear?” my grandmother asks.

I open my mouth to answer, but I stop. Because I don’t know if what I’m about to say is mine. The words forming in my head: “I’m fine, just tired.” Are they genuine? Or are they what the model suggests people say in moments like this, aggregated from millions of similar conversations, compressed into semantic vectors and returned to me as if they were my own?