
ICCL Enforce project offers Verity fact-checking server

You may find yourself living with a new tech stack. And you may find yourself in an unfamiliar world. And you may find yourself behind a keyboard and screen, with a mouse and inscrutable AI. And you may ask yourself, how do I vet this?

The Irish Council for Civil Liberties (ICCL) Enforce project has a suggestion: install its Verity MCP server.

“LLMs [large language models] confidently claim things that are manifestly untrue,” the advocacy organization explains. “Enforce has developed Verity, a tool that helps minimise false claims and fake sources from self-hosted LLMs.”

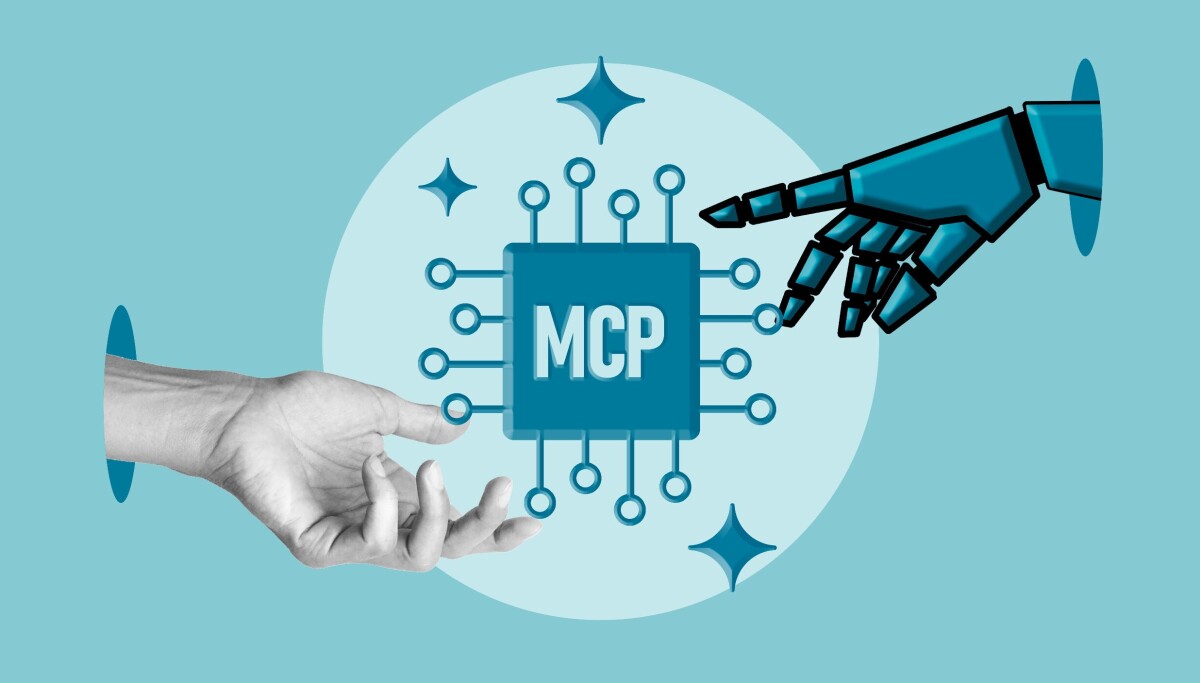

An MCP (Model Context Protocol) server provides AI models with access to external tools, data, and services.

The Verity MCP server offers access to a set of smaller models that try to assess the accuracy of a primary LLM running locally. Local operation is something more people have begun to explore in response to rising prices at cloud AI providers, availability issues, and privacy concerns.

Verity is not simply an LLM-as-judge setup in which one LLM evaluates the output of another. Rather, it’s a set of seven layers designed to review model output.

The system involves: strict rules for fact sourcing; a strong critic LLM that differs from the primary model family; a small critic LLM similar to the strong critic but from different training data; an encoder transformer trained on entailment labels; a regex evaluator; a stochastic re-sampler for catching low-confidence guesses; and a logprob analyser that checks token entropy.
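To give a rough sense of what the last two layers might look at, here is a minimal Python sketch. It is not Verity's code: the function names, the input shapes, and the 1.0-nat threshold are all assumptions made for illustration. It computes the Shannon entropy of each token's candidate distribution from log probabilities, and measures how often repeated samples of the same question agree.

```python
import math
from collections import Counter


def token_entropy(top_logprobs):
    """Shannon entropy, in nats, of one token's candidate distribution.

    top_logprobs is a hypothetical dict mapping candidate token -> natural-log
    probability. Top-k lists are truncated, so probabilities are renormalised.
    """
    probs = [math.exp(lp) for lp in top_logprobs.values()]
    total = sum(probs)
    if total == 0:
        return 0.0
    return -sum((p / total) * math.log(p / total) for p in probs if p > 0)


def flag_uncertain_tokens(tokens, threshold=1.0):
    """Return the text of tokens whose entropy exceeds the threshold.

    tokens is a list of (token_text, top_logprobs) pairs. The threshold is an
    arbitrary illustrative value, not one taken from Verity.
    """
    return [text for text, cands in tokens if token_entropy(cands) > threshold]


def resample_agreement(answers):
    """Fraction of sampled answers that match the most common answer."""
    if not answers:
        return 0.0
    normalised = [a.strip().lower() for a in answers]
    top_count = Counter(normalised).most_common(1)[0][1]
    return top_count / len(normalised)
```

A token the model is sure of has entropy near zero, while a token chosen from several near-equal candidates scores high. Likewise, if re-asking the same question at a non-zero temperature yields answers that disagree, the agreement score drops, which hints that the original answer may have been a guess.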

The reference build assumes a 2021 PC, with an Nvidia RTX 5070 Ti (16 GB, 2025) for the primary model (Qwen 3.5 9B, Q4_K_M), and an AMD Radeon RX 5700 XT (8 GB, 2019) for the critic models (IBM Granite 3.2 8B & 2B, Q4_K_M).

The hardware recommendations call for a system with two GPUs, but that’s to allow concurrent delivery of a second opinion from the Verity checker. On a machine with one GPU, like a MacBook Pro or Mac mini, the system can be configured to evaluate the primary LLM’s output after the fact.
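The difference between the two setups can be sketched in a few lines of Python. This is an illustration only, assuming stub functions in place of real model calls; `primary_answer`, `critic_opinion`, and `critic_review` are hypothetical names, not part of Verity.

```python
import asyncio


async def primary_answer(question):
    # Stand-in for the primary model running on the first GPU.
    await asyncio.sleep(0.01)
    return f"draft answer to: {question}"


async def critic_opinion(question):
    # Stand-in for a critic model answering independently on a second GPU.
    await asyncio.sleep(0.01)
    return f"second opinion on: {question}"


async def critic_review(draft):
    # Stand-in for a critic that inspects the finished draft.
    await asyncio.sleep(0.01)
    return f"review of: {draft}"


async def run_concurrent(question):
    """Two GPUs: the draft and the second opinion are produced side by side."""
    draft, opinion = await asyncio.gather(
        primary_answer(question), critic_opinion(question)
    )
    return draft, opinion


async def run_after_the_fact(question):
    """One GPU: the critic runs only once the primary model has finished."""
    draft = await primary_answer(question)
    review = await critic_review(draft)
    return draft, review
```

In the concurrent case the two calls overlap, so the second opinion adds little waiting time. In the single-GPU case the steps run back to back, so the check costs extra time but needs no second card.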

“Even the biggest LLMs inevitably produce false claims,” said Dr Johnny Ryan, director of ICCL Enforce, in an email to The Register. “This is a feature of how an LLM works. But this is dangerous when people start to put their faith in these systems. LLMs are being incorporated into judicial systems, public services, corporate life, the military, and people’s private decision making. For example, Google’s automated search summaries will confidently claim things that are not proven by the sources they cite.”

Ryan said if people intend to rely on LLMs for factual answers, they need a verification process.

“One beauty of running LLMs on your own hardware is that you can take steps against this,” he said, adding that local operation provides an opportunity to enlist old hardware to offer a second opinion alongside the main model output.

“So for example, when your machine is working on an answer to a question you have asked, you can have an old graphics card that independently produces a second opinion using a different LLM on the same machine, and the two can then debate at the end without significantly slowing down the process,” he explained.

The downside of an all-local approach is that models have a training cutoff and won’t be very useful for checking facts established after that date unless armed with tools for online data fetching. If you can get Verity up and running, that shouldn’t be a problem. ®