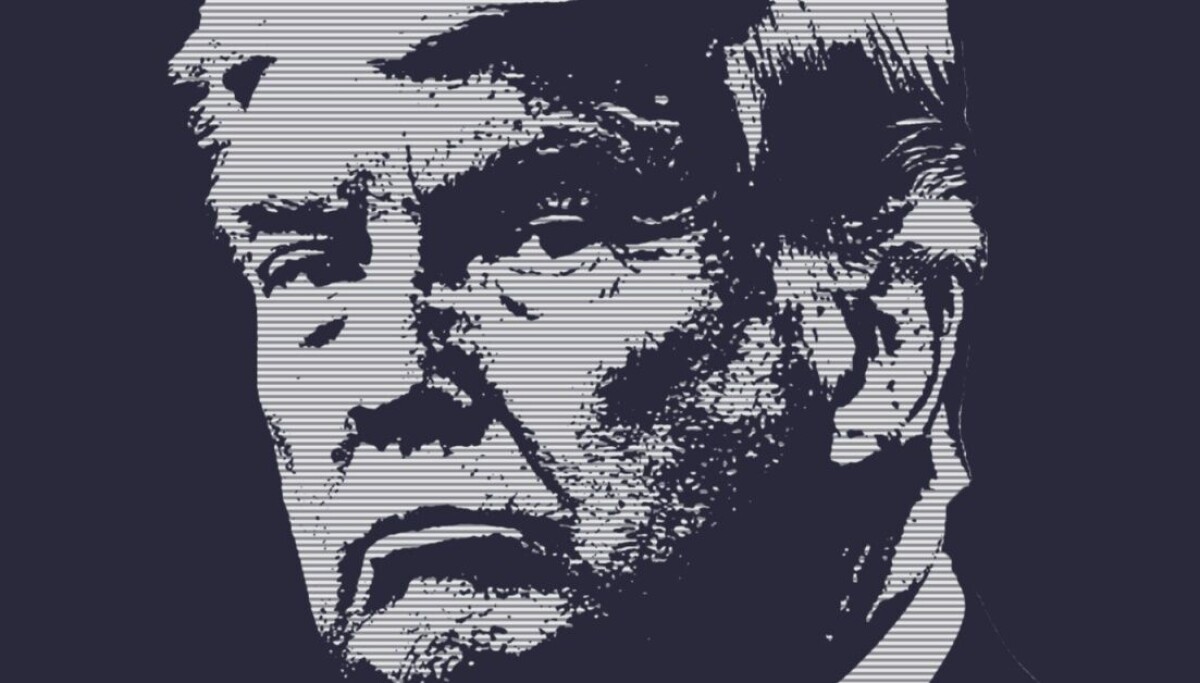

OPINION When President Donald Trump returned to power, he cast himself as the anti‑Biden on AI. First, he tore up Biden’s Executive Order 14110, which had demanded “safe, secure, and trustworthy” AI. He then replaced it with his own “Removing Barriers to American Leadership in Artificial Intelligence” directive, ordering agencies to rescind or dilute rules seen as obstacles to innovation.

In short, American AI vendors could do anything they wanted. That was then. This is now.

While Trump has yet to issue a new AI Executive Order, we know his crew is forming an AI working group of tech execs and government officials to bring oversight to AI. Specifically, they’re considering requiring all new “high‑risk” AI frontier models to undergo a formal government review before they can be used.

That’s going to go over well.

What we do know is that National Economic Council Director Kevin Hassett has said: “We’re studying possibly an executive order to give a clear roadmap to everybody about how this is gonna go, and how future AIs that also potentially create vulnerabilities should go through a process so that they’re released into the wild after they’ve been proven safe – just like an FDA drug.”

Considering that people who ignore evidence now regulate healthcare in the United States, that doesn’t fill me with much confidence. Indeed, we now know the FDA blocked the publication of studies showing that COVID-19 and shingles vaccines were safe. Are these the kinds of people we want calling the shots on AI?

Be that as it may, the Trump yes-men are framing this shift as a response to escalating cybersecurity and national‑security risks rather than as a broader embrace of EU‑style AI regulation. Yes, they’re looking at Anthropic’s Mythos and its potential use by hackers.

At the same time, they emphasize that they want to avoid “onerous” controls on everyday AI applications. Frontier models that could supercharge cyberwarfare, bio‑threats, or other strategic dangers are another matter.

That’s quite a change from last summer when Trump babbled: “We have to grow that [AI] baby and let that baby thrive. We can’t stop it. We can’t stop it with politics. We can’t stop it with foolish rules and even stupid rules.”

Now he seems to think rules would be a good thing. Darrell West, a senior fellow at the Center for Technology Innovation at the Brookings Institution, has suggested that Trump is returning to Biden’s policy. Just don’t tell him that; he’ll have a fit.

While Trump and company are still contemplating exactly how they want to rule – sorry, regulate – AI, the Department of Commerce’s Center for AI Standards and Innovation (CAISI) announced new agreements with Google DeepMind, Microsoft, and xAI. According to these new policy statements, CAISI will conduct pre-deployment evaluations and targeted research to better assess frontier AI capabilities and advance the state of AI security.

CAISI director Chris Fall said: “Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications.”

How to do this? Who will do this? What will it look like? Good questions! Too bad we don’t have any answers yet.

You may have noticed that Anthropic was not invited to this cozy policy get-together. Funny, that, since most observers think that Mythos was the model that broke the “do anything you want” AI camel’s back in Trump’s White House.

That’s because the months‑long feud between the administration and Anthropic is still simmering. Trump’s team moved to block federal agencies from using the company’s tools, and Anthropic is now challenging that policy in court.

Recently, however, Trump’s tone has softened. Trump told CNBC that Anthropic was “shaping up.” If he can’t get peace with Iran, maybe peace with Anthropic will please him. On the other hand, we also know that the Trumpies are considering forbidding companies from “interfering” with the government’s use of AI models. You hear that, Anthropic? You will toe the line!

Meanwhile, Gregory Falco, a Cornell assistant professor of mechanical and aerospace engineering, pointed out the obvious: “The federal government does not currently have the in-house technical expertise, infrastructure, or day-to-day insight needed to directly evaluate these systems on its own.” Expertise is something Trump’s cast of characters sorely lacks across any and all subjects.

“At the same time,” Falco continued, “a purely voluntary model of self-governance is not enough.” After all, foxes are notorious guardians of chicken houses.

What I think is going to happen is that AI vendors who play ball with Trump will end up “governing” AI alongside some Trump loyalists. It’s going to be ugly. Some regulation is needed, but these are not the people who will do a good job of it.

I won’t be surprised if one of Trump’s goals is less to make AI safer than to ensure that the answers AI gives are the ones he and his regime want people to see. Today, for example, when I asked a variety of chatbots who lost the 2020 election, they all agreed Trump had lost. Funnily enough, when the Senate Judiciary Committee asked numerous Trump nominees for federal judgeships the same question, they universally refused to say he lost.

For better or worse, most Americans don’t pay attention to legal news. What they do, however, is ask AI chatbots for answers. Foolish of them, considering how inaccurate chatbots can be, but there it is. If Trump’s allowed to call the shots, I’ve little doubt that the approved bots will follow in the footsteps of his obedient judges and give the answers he wants and not the truth. ®